Even if Calvin finds the correct, feasible tour traversing the 23+ 'cities', it is never going to be Hobbes-optimal.

(pic source: twitter)

Showing posts with label TSP. Show all posts

Showing posts with label TSP. Show all posts

Saturday, November 29, 2014

Tuesday, March 4, 2014

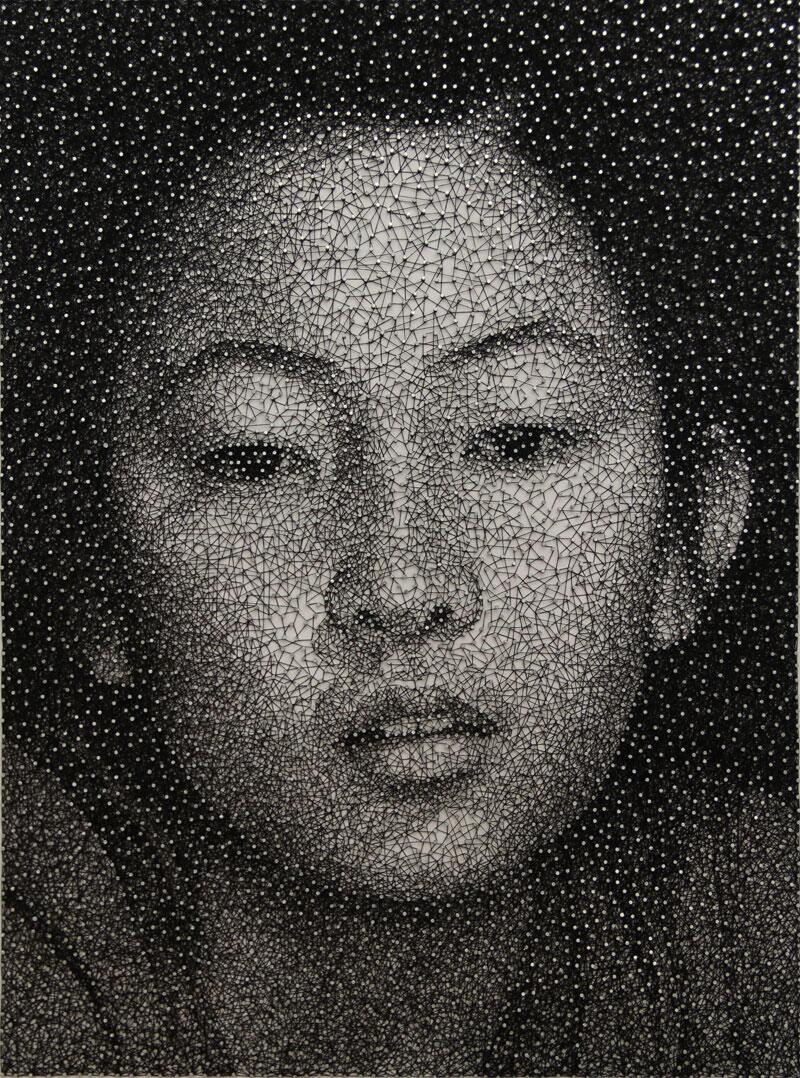

Traveling Salesman Problem in a Portrait?

I'd planned to stop blogging until May this year to do my small bit in helping Narendra Modi become the next Prime Minister of India in the Indian general elections to be held very soon - one that will determine the future of my family there, as well as the destiny of 1.2 Billion Indians. India has suffered from a curse of culpable silence in the last ten years, but now it seems, that is changing, thanks to inspiring examples like these that asks people to come out and 'Vote for India' (thanks to @sarkar_swati, faculty at U-Penn, for sharing this video).

------------------------------------------------------------------------------

By sheer coincidence, this brief blog, like the previous one, is related to Asian (Japanese) art, and one i felt 'compelled' to do. Thanks to @SimoneCerbolini for sharing this beautiful portrait on twitter. What is interesting about this art is that it was done, as Simone tweets, using "a single thread wrapped around thousands of nails. Artwork "Mana" by Kumi Yamashita"

The artist, Kumi Yamashita, has a facebook page, and here is another picture from there.

The effect is stunning, and one marvels at the human 'cognitive shift' that convinces us that within this collection of thread and nails, is a lady. Here is a brilliant talk by neuroscientist V. S. Ramachandran (Director, Center for Brain and Cognition, UC, San Diego) on 'Aesthetic Universals and the Neurology of Hindu Art' that explains this in depth.

From the operations research perspective, we gravitate toward the mathematical problem hidden in this portrait: determining the least length of thread to traverse through all these nails. A mathematical optimization model that can be used to answer this question is the celebrated 'Traveling Salesman Problem' (TSP), which is known to be difficult to solve, in theory. In practice, however, extremely large instances have been solved to provable optimality.

Here is another page that displays a collection of pictures from the artist's 'constellation series'. It also includes this ultra-close up that lets us see how the threading really progresses at the micro-level.

Each nail is traversed multiple times, and a greater density of thread is used to create a darker shade (e.g. the eye and the brow). Additionally, there appears to be a greater density of nails in that area. Can a thread-traversal path generated by a TSP solver (e.g. concorde) produce a similar effect to the eye and the brain? I'm not sure, although it may still produce 'a reasonable picture of a lady'. If the density of nails is increased in these areas, then perhaps the TSP-artwork may do a good job. Alternatively, it may be possible to modify the TSP network structure to induce such an effect.

------------------------------------------------------------------------------

By sheer coincidence, this brief blog, like the previous one, is related to Asian (Japanese) art, and one i felt 'compelled' to do. Thanks to @SimoneCerbolini for sharing this beautiful portrait on twitter. What is interesting about this art is that it was done, as Simone tweets, using "a single thread wrapped around thousands of nails. Artwork "Mana" by Kumi Yamashita"

The artist, Kumi Yamashita, has a facebook page, and here is another picture from there.

The effect is stunning, and one marvels at the human 'cognitive shift' that convinces us that within this collection of thread and nails, is a lady. Here is a brilliant talk by neuroscientist V. S. Ramachandran (Director, Center for Brain and Cognition, UC, San Diego) on 'Aesthetic Universals and the Neurology of Hindu Art' that explains this in depth.

From the operations research perspective, we gravitate toward the mathematical problem hidden in this portrait: determining the least length of thread to traverse through all these nails. A mathematical optimization model that can be used to answer this question is the celebrated 'Traveling Salesman Problem' (TSP), which is known to be difficult to solve, in theory. In practice, however, extremely large instances have been solved to provable optimality.

Here is another page that displays a collection of pictures from the artist's 'constellation series'. It also includes this ultra-close up that lets us see how the threading really progresses at the micro-level.

Each nail is traversed multiple times, and a greater density of thread is used to create a darker shade (e.g. the eye and the brow). Additionally, there appears to be a greater density of nails in that area. Can a thread-traversal path generated by a TSP solver (e.g. concorde) produce a similar effect to the eye and the brain? I'm not sure, although it may still produce 'a reasonable picture of a lady'. If the density of nails is increased in these areas, then perhaps the TSP-artwork may do a good job. Alternatively, it may be possible to modify the TSP network structure to induce such an effect.

Monday, December 9, 2013

Optimize Your In-store Holiday Shopping Route

Remember the last time you were not tired after holiday shopping in a busy mall, bulk shopping at a discount-club store, or weekend shopping at a crowded grocery store?

(source links: http://info.museumstoreassociation.org, http://binaryapi.ap.org)

Hopefully, the simple and preliminary 'operations research (O.R)' analysis in this post can help make the experience a little less stressful and maybe even let us enjoy a bit of retail therapy that we can't get online. The shopping optimization problem addressed here is simple one, albeit with a twist. This post was triggered by an observation made after recent shopping expeditions to a nearby Costco outlet.

We have a long shopping list of L line-items (i) of various quantities N(i) that we have to pick up in a store. How should we optimally reorder our shopping list to reflect the sequence in which we pick up these items?

Version 1

The simple version aims to minimize total shopping time. For example, we can formulate this as an instance of the so-called traveling salesman problem (TSP) that finds the shortest sequence that starts at the entrance, visits each of the L 'cities' once, then the checkout counter, and ends at the store exit (entrance). A google search shows this 2009 research paper by researchers at UPenn, which shares the technical details and interesting results for this version.

To simplify our problem, we assume that the topology of the store is prior knowledge, and we can roughly pre-assign items in the same aisle to the same 'city'. The resultant problem is to find the order of visits to the aisles of interest to us. If the aisles are neatly arranged as node intersections in a rectangular grid, then we simply have to find the shortest rectilinear "Manhattan" distance tour in this grid. Depending on the type of the store, the store-layout itself may be optimized by the retailer based on the observed foot-traffic and 'heat-map' data. For example, it may be designed to retain the customer in the system to increase chances of purchase (exploratory tour, store selling expensive luxury/fashion products), or quickly out of the system (routine tour, obligatory products like groceries), or based on some other goal. Store space and layout problems are known to be commercially useful optimization problems in the retail industry.

Picture linked from an iTunesApp (Concorde) page of Prof. Bill Cook that solves TSPs :)

Version 2

Let us additionally assume that product attributes have an impact on our order. Suppose one or more items in our list is located in the frozen section at a grocery store, then we may prefer to get there at the end of our tour. Luckily, many stores appear to anticipate this (?), and locate this section towards the end of the store. Thus if 'maximum freshness' or 'minimum damage' is an additional consideration, this may alter the TSP route and the final ordering of items in our shopping list.

Version 3

This was the problem that was of immediate interest to me today. Costco sells stuff in bulk, so the items tend to be heavy, and considerable energy is expended moving the cart through the store. At the risk of mangling undergrad physics, let us proceed with our analysis...

Let μ be the (constant) coefficient of rolling friction between the wheels of the shopping cart and the store-floor. Then the work required to move mass m through distance d = force x displacement =μmgd, where g is the (constant) acceleration due to gravity. Thus a squeaky-wheeled cart will make us work extra hard. Speed of shopping is a consideration if we want to keep track of power (force x velocity). Covering the same tour length in half the time requires twice the power, i.e. the rate at which our body expends stored energy.

Carry versus Cart (reinventing the wheel)

If we had zero rolling friction, technically the only work involved is in lifting items and putting them in our cart. We can assume that this work is independent of our order of visit and is a fixed quantity. On the other hand, if we are not working with a wheeled-cart, but carry our stuff in a market-basket or shopping bags, then we have to raise the potential energy of the items to height 'h' (mgh) all the way until checkout, and also overcome the sliding friction between our shoes and the floor, which would probably be higher than the rolling friction, as illustrated below.

(link source http://static8.depositphotos.com)

Why is a shopping experience increasingly stressful over time?

As we add items to our cart, the work done per unit time (power) required increases. In the continuous case, this problem closely resembles our 'optimal snow shoveling problem' analyzed during a February snow storm. The accumulated objective function cost there increases as the square of the distance. Here, we look at the discrete situation. When we visit city 'i' to pick up N(i) items each having mass m(i), we instantaneously add mass N(i) * m(i) that we have to lug around until checkout. After picking up k-1 items, my total work done will approximately be:

μg * sum (i = 0,... k-1) W(i) * d(i, i+1), where i = 0, represents the store entrance, m(0) represents the mass of an empty shopping cart, and

W(i) = sum(j = 0, .., i) N(j) * m(j) = total mass carried from city i to i+1.

In other words, the 'cost' accrued while traversing any pair of cities also depends on the items we picked up earlier, i.e., it is no longer memory-less and varies depending on the cities visited earlier.

Result: our shopping gets increasingly more tiring over time

If one of the items in our shopping list involve a heavy item, then our optimal solution may no longer be the shortest time tour. We now have to solve a minimum-effort TSP. Operations Researchers have also looked at methods for solving a path-dependent TSP in the past. A simple heuristic approach would be to break the trip into two tours. The earliest items stay with us the longest, so we first find the optimal sequence through the light items, followed by the shortest tour through the heavy items. We also have to organize the shopping cart carefully enough to ensure that our light items do not get squished by heavy products, and our ice-cream doesn't melt. I'm sure there are better algorithms than the one provided here.

Recommendation

If we want to burn calories while shopping then a good way to do that, based on our previous discussion is (a) carry our items, (b) pick up the heaviest items first, and (c) take the longest route to maximize energy expenditure (d) walk briskly. This health-and-fitness article provides ten tips for exercising that includes these ideas. For the rest of us who are looking to minimize effort, we simply do the opposite:

We sort our shopping list items in decreasing order of expected weight (frozen stuff goes to the bottom too), while also ensuring that the aisles of adjacent items in the list are close to each other, and we are not revisiting aisles or zig-zagging much. Some swapping may be required to find improved sequences. Many of us have probably evolved into efficient shoppers over time, and naturally take these steps and more, but if there an opportunity for improvement that the 'science of better' can uncover for us to make our shopping less stressful, let's take it.

Happy Holidays!

Update 1: December 17, 2013

IBM Smarter Retail article says:

"..... Most recently, Lowe’s has offered the customer a product location functionality that is integrated with their wish list. According to Ronnie Damron, .....Whether customers are browsing the store for ideas or searching for a specific item in a hurry, we think the Product Locator feature will simplify the shopping process, creating a better experience that encourages customers to come back again and again.”

(source links: http://info.museumstoreassociation.org, http://binaryapi.ap.org)

Hopefully, the simple and preliminary 'operations research (O.R)' analysis in this post can help make the experience a little less stressful and maybe even let us enjoy a bit of retail therapy that we can't get online. The shopping optimization problem addressed here is simple one, albeit with a twist. This post was triggered by an observation made after recent shopping expeditions to a nearby Costco outlet.

We have a long shopping list of L line-items (i) of various quantities N(i) that we have to pick up in a store. How should we optimally reorder our shopping list to reflect the sequence in which we pick up these items?

Version 1

The simple version aims to minimize total shopping time. For example, we can formulate this as an instance of the so-called traveling salesman problem (TSP) that finds the shortest sequence that starts at the entrance, visits each of the L 'cities' once, then the checkout counter, and ends at the store exit (entrance). A google search shows this 2009 research paper by researchers at UPenn, which shares the technical details and interesting results for this version.

To simplify our problem, we assume that the topology of the store is prior knowledge, and we can roughly pre-assign items in the same aisle to the same 'city'. The resultant problem is to find the order of visits to the aisles of interest to us. If the aisles are neatly arranged as node intersections in a rectangular grid, then we simply have to find the shortest rectilinear "Manhattan" distance tour in this grid. Depending on the type of the store, the store-layout itself may be optimized by the retailer based on the observed foot-traffic and 'heat-map' data. For example, it may be designed to retain the customer in the system to increase chances of purchase (exploratory tour, store selling expensive luxury/fashion products), or quickly out of the system (routine tour, obligatory products like groceries), or based on some other goal. Store space and layout problems are known to be commercially useful optimization problems in the retail industry.

Picture linked from an iTunesApp (Concorde) page of Prof. Bill Cook that solves TSPs :)

Version 2

Let us additionally assume that product attributes have an impact on our order. Suppose one or more items in our list is located in the frozen section at a grocery store, then we may prefer to get there at the end of our tour. Luckily, many stores appear to anticipate this (?), and locate this section towards the end of the store. Thus if 'maximum freshness' or 'minimum damage' is an additional consideration, this may alter the TSP route and the final ordering of items in our shopping list.

Version 3

This was the problem that was of immediate interest to me today. Costco sells stuff in bulk, so the items tend to be heavy, and considerable energy is expended moving the cart through the store. At the risk of mangling undergrad physics, let us proceed with our analysis...

Let μ be the (constant) coefficient of rolling friction between the wheels of the shopping cart and the store-floor. Then the work required to move mass m through distance d = force x displacement =μmgd, where g is the (constant) acceleration due to gravity. Thus a squeaky-wheeled cart will make us work extra hard. Speed of shopping is a consideration if we want to keep track of power (force x velocity). Covering the same tour length in half the time requires twice the power, i.e. the rate at which our body expends stored energy.

Carry versus Cart (reinventing the wheel)

If we had zero rolling friction, technically the only work involved is in lifting items and putting them in our cart. We can assume that this work is independent of our order of visit and is a fixed quantity. On the other hand, if we are not working with a wheeled-cart, but carry our stuff in a market-basket or shopping bags, then we have to raise the potential energy of the items to height 'h' (mgh) all the way until checkout, and also overcome the sliding friction between our shoes and the floor, which would probably be higher than the rolling friction, as illustrated below.

(link source http://static8.depositphotos.com)

Why is a shopping experience increasingly stressful over time?

As we add items to our cart, the work done per unit time (power) required increases. In the continuous case, this problem closely resembles our 'optimal snow shoveling problem' analyzed during a February snow storm. The accumulated objective function cost there increases as the square of the distance. Here, we look at the discrete situation. When we visit city 'i' to pick up N(i) items each having mass m(i), we instantaneously add mass N(i) * m(i) that we have to lug around until checkout. After picking up k-1 items, my total work done will approximately be:

μg * sum (i = 0,... k-1) W(i) * d(i, i+1), where i = 0, represents the store entrance, m(0) represents the mass of an empty shopping cart, and

W(i) = sum(j = 0, .., i) N(j) * m(j) = total mass carried from city i to i+1.

In other words, the 'cost' accrued while traversing any pair of cities also depends on the items we picked up earlier, i.e., it is no longer memory-less and varies depending on the cities visited earlier.

Result: our shopping gets increasingly more tiring over time

If one of the items in our shopping list involve a heavy item, then our optimal solution may no longer be the shortest time tour. We now have to solve a minimum-effort TSP. Operations Researchers have also looked at methods for solving a path-dependent TSP in the past. A simple heuristic approach would be to break the trip into two tours. The earliest items stay with us the longest, so we first find the optimal sequence through the light items, followed by the shortest tour through the heavy items. We also have to organize the shopping cart carefully enough to ensure that our light items do not get squished by heavy products, and our ice-cream doesn't melt. I'm sure there are better algorithms than the one provided here.

Recommendation

If we want to burn calories while shopping then a good way to do that, based on our previous discussion is (a) carry our items, (b) pick up the heaviest items first, and (c) take the longest route to maximize energy expenditure (d) walk briskly. This health-and-fitness article provides ten tips for exercising that includes these ideas. For the rest of us who are looking to minimize effort, we simply do the opposite:

We sort our shopping list items in decreasing order of expected weight (frozen stuff goes to the bottom too), while also ensuring that the aisles of adjacent items in the list are close to each other, and we are not revisiting aisles or zig-zagging much. Some swapping may be required to find improved sequences. Many of us have probably evolved into efficient shoppers over time, and naturally take these steps and more, but if there an opportunity for improvement that the 'science of better' can uncover for us to make our shopping less stressful, let's take it.

Happy Holidays!

Update 1: December 17, 2013

IBM Smarter Retail article says:

"..... Most recently, Lowe’s has offered the customer a product location functionality that is integrated with their wish list. According to Ronnie Damron, .....Whether customers are browsing the store for ideas or searching for a specific item in a hurry, we think the Product Locator feature will simplify the shopping process, creating a better experience that encourages customers to come back again and again.”

Sunday, June 16, 2013

Traveling Surveyor: Contributions of Mahalanobis to Analytics - 2

Bengal

This post is the second in a series of blogs based on the work done by P. C. Mahalanobis in the area of statistics, analytics, and operations research between 1930-1960. We move north-east from the location of our prior post on storm-flood forecasting (Orissa) to Bengal: a land of science, wisdom, and dharma that gave to the world a Vivekananda whose thoughts deeply affected Gandhi's contribution to India's freedom struggle, who in turn shaped the work of Martin Luther King, Jr., and Nelson Mandela, and thus the civil liberties of a significant population of the world.

Much of discussion here is gleaned from ISI archives, websites, and various papers from Sankhya, ISI's flagship journal. Those interested in a more detailed and accurate analysis of this work are referred directly to ISI's journal material.

Jute

Bengal (including Bangladesh, formerly East Bengal) produces much of the world's jute. India is the largest producer and consumer of Jute today, followed by Bangladesh. Jute is an incredibly useful crop and has been a significant contributor to Bengal's revenue for a long time. Here are some contemporary pictures of standing Jute crop in Bengal.

(pics source: informedfarmers.com)

Why this work is important

Prior to 1947, when India was still occupied by the British Raj (there's a very relevant reason for bringing this up, but we'll get to that later), forecasting the supply of this valuable and lucrative crop in Bengal was largely a product of bad guesswork. Like most other sectors in India, the agricultural sector too is highly decentralized, which means a myriad of tiny farms growing jute and other crops, all of which had to be surveyed if one wanted to get an exact, enumerated production number. Mahalanobis came up with an alternative in the 1930-40s using methods derived from statistics and a field that is now termed 'Operations Research': a scarce-resource optimized method for accurate crop forecasting. Today, the Government of India employs sophisticated remote sensing including a Satellite Survey System to improve crop forecasts, but the methods developed then are still relevant and valuable. The seminal work of Mahalanobis in developing an optimal sample-based survey is also interesting to read from a practitioner's perspective. The combination of ideas employed in the work done in the 1930s include data analysis, statistical modeling, pilot study, scarce resource allocation, and mathematical optimization, and ranks among the great achievements in the practice of Operations Research and Analytics.

A map of undivided Bengal, circa 1850 C. E. (source: http://jrahman.files.wordpress.com)

Motivation

Jute and cotton were two of the most important exports out of India after the manufacturing sectors was crippled by the British Raj - 24% of the total revenue between 1927-37 was from Jute. Estimating the total Jute produce in Bengal up until the 1940s was largely a product of guesswork and ad-hoc estimates provided by the administrative chain of the British Raj produced wildly varying numbers. Like other parts of India, cultivation in Bengal was decentralized and spread over nearly 100 Million small farms, which were on average less than half-an-acre in area, spread over more than 60, 000 sq. miles. Jute was grown in a subset of these farms. Furthermore, the cultivation lifecycle of Jute is very short - about two months from planting to harvesting. Consequently, even if the administration was willing to cough up the expenses for an enumeration survey, covering all these farms within 8-9 weeks would be extremely expensive, if not impossible. Add to the fact, that many plots (30%) that cultivated Jute also cultivated other crops in parallel. Thus, while in theory, we can expect a total enumeration to give us near-zero error, in practice, allotting multi-crop areas to Jute and other Human-induced errors would introduce noise. In fact, the report states that the biggest negative associated with an enumerative survey was not the prohibitive cost but its unreliability, and this motivated Mahalanobis to develop and implement an alternative approach that accomplished the task at a fraction of the cost and time, and at a higher level of accuracy using random sampling.

Random Sampling

The nearly 100M jute farms were spread over Bengal in a non-homogeneous manner. Some areas were densely cultivated, some sparsely. The approach was to partition the total area into zones, i = 1, ..., n (area A_i) whose area was internally homogeneous (kinda like the way finite element analysis is used in structural engineering). Within each zone, a number of areas or grids were selected and sampled at random. If a sufficient number of such grids were sampled, the average proportion of area under Jute within a zone (J_i) can be obtained, which allows us to predict the total area under Jute = sum(i) A_i * J_i.

Decision variables

1. The partition of the total area into approximately homogeneous zones

2. The number of random samples within a zone

3. The area of a sample

For simplicity, we assume that the first decision of partitioning the area within Bengal is an external input and thus our focus is on optimizing the remaining two decisions.

Constraints

1. The cost of the whole operation depends on the second and third set of decision variables. For a given budget, if the area of an individual sample is large, then the number of samples has to be reduced, and thus the samples would be more spread out and further away from each other.

2. The achievable precision (variance) varies similarly. If the sample area is large, the per-sample variance is smaller, but cost considerations limited the number of such large-samples, and this can hurt the overall variance accumulated across the zone. On the other hand, a smaller area in tandem with a larger number of such small-samples affects precision in an opposite manner.

Nonlinear Optimization Problem

Given either cost or precision as a hard constraint, select the sampling area and the number of random samples to maximize precision, or minimize cost.

Mahalanobis' approach attempts to model the change in variance and cost as continuous functions of the two decision variable sets. Once these functions are at hand, a local optimum is obtained using a derivative based Lagrange-multiplier method. Mahalanobis used this approach to tabulate the achievable precision for a range of cost levels.

An exploratory, small-scale (pilot) survey was initially conducted at a small expense as a proof-of-concept and proof-of-technology validation of the methodology prior to embarking on a full-scale project. This type of an approach is now widely adopted in many business analytics projects.

The effect of the decision variables on variance can be calculated relying on theoretical methods. However, human-induced errors were also common, and Mahalanobis used the idea of interpenetrating half-sample pairs, where two groups independently arrived at Jute area estimates for a given location. There are many important details here that are left out for brevity. The cost calculation is detailed and empirical and depends on the nature of the survey, and among things, include:

a. cost of staying and surveying at a given site - this depends on the size of the sampled area and time spent

b. cost of traveling from sample to sample - this depends on the distances between the chosen samples and the sequence of visiting.

Again, we have left out a humongous amount of cost calculations that were done. Reading the reports that came out of this work, one is amazed by the time and effort devoted to meticulously tabulating the various costs that go beyond 'ball-park' estimates, to produce an accurate cost function. For example cost calculation (b) depends on the solution to the corresponding traveling salesman problem.

The TSP

One of the many reports that came out of this this project notes:

(source: Sankhya journal, 1940)

This cost calculation is reviewed by Applegate, Bixby, et al. in their book on TSP and in Bill Cook's 'In Pursuit of the Traveling Salesman'. A literature review of this TSP in these books mention that researchers later showed that the expected length of the optimal tour was approximately between (0.707, 1.27) times the square root of the number of samples visited in a unit square, so Mahalanobis' 1930s estimate was a remarkably good choice.

Results and Business Impact

The cost- and precision-controlled random sampling approach proved to be revolutionary. It achieved greater precision at a fraction of the cost. Specifically, the margin of error was +/- 2%, and the cost was 1/15 of an enumeration census that was performed the same year and found to be less accurate compared to the random sampling approach. Thus the benefit and return-on-investment of this analytical approach was successfully demonstrated in practice, which received widespread recognition and was later embraced by the Government of independent India for many nationwide surveys.

Prelude to Part-3: The Bengal Holocaust

Within a couple years of the successful demonstration and publication of this work, Mahalanobis' Bengal lost between 2-6 million people due to starvation and disease between 1942-1945, triggered in part possibly by a failure of rice crop. The British Raj, locked in an grim Atlantic battle during WW2, may have suppressed reports and figures. It appears that most of the world, and even a vast majority of Indians, to this day, remain unaware of the reality behind this event. How to obtain a reasonable estimate of casualties due to this disaster? Who was responsible and how? A recent book has brought this controversy into the open, and it appears that Mahalanobis (and his statistical sampling method) may have played a critical part in solving this puzzle.

To be continued.

This post is the second in a series of blogs based on the work done by P. C. Mahalanobis in the area of statistics, analytics, and operations research between 1930-1960. We move north-east from the location of our prior post on storm-flood forecasting (Orissa) to Bengal: a land of science, wisdom, and dharma that gave to the world a Vivekananda whose thoughts deeply affected Gandhi's contribution to India's freedom struggle, who in turn shaped the work of Martin Luther King, Jr., and Nelson Mandela, and thus the civil liberties of a significant population of the world.

Much of discussion here is gleaned from ISI archives, websites, and various papers from Sankhya, ISI's flagship journal. Those interested in a more detailed and accurate analysis of this work are referred directly to ISI's journal material.

Jute

Bengal (including Bangladesh, formerly East Bengal) produces much of the world's jute. India is the largest producer and consumer of Jute today, followed by Bangladesh. Jute is an incredibly useful crop and has been a significant contributor to Bengal's revenue for a long time. Here are some contemporary pictures of standing Jute crop in Bengal.

(pics source: informedfarmers.com)

Why this work is important

Prior to 1947, when India was still occupied by the British Raj (there's a very relevant reason for bringing this up, but we'll get to that later), forecasting the supply of this valuable and lucrative crop in Bengal was largely a product of bad guesswork. Like most other sectors in India, the agricultural sector too is highly decentralized, which means a myriad of tiny farms growing jute and other crops, all of which had to be surveyed if one wanted to get an exact, enumerated production number. Mahalanobis came up with an alternative in the 1930-40s using methods derived from statistics and a field that is now termed 'Operations Research': a scarce-resource optimized method for accurate crop forecasting. Today, the Government of India employs sophisticated remote sensing including a Satellite Survey System to improve crop forecasts, but the methods developed then are still relevant and valuable. The seminal work of Mahalanobis in developing an optimal sample-based survey is also interesting to read from a practitioner's perspective. The combination of ideas employed in the work done in the 1930s include data analysis, statistical modeling, pilot study, scarce resource allocation, and mathematical optimization, and ranks among the great achievements in the practice of Operations Research and Analytics.

A map of undivided Bengal, circa 1850 C. E. (source: http://jrahman.files.wordpress.com)

Motivation

Jute and cotton were two of the most important exports out of India after the manufacturing sectors was crippled by the British Raj - 24% of the total revenue between 1927-37 was from Jute. Estimating the total Jute produce in Bengal up until the 1940s was largely a product of guesswork and ad-hoc estimates provided by the administrative chain of the British Raj produced wildly varying numbers. Like other parts of India, cultivation in Bengal was decentralized and spread over nearly 100 Million small farms, which were on average less than half-an-acre in area, spread over more than 60, 000 sq. miles. Jute was grown in a subset of these farms. Furthermore, the cultivation lifecycle of Jute is very short - about two months from planting to harvesting. Consequently, even if the administration was willing to cough up the expenses for an enumeration survey, covering all these farms within 8-9 weeks would be extremely expensive, if not impossible. Add to the fact, that many plots (30%) that cultivated Jute also cultivated other crops in parallel. Thus, while in theory, we can expect a total enumeration to give us near-zero error, in practice, allotting multi-crop areas to Jute and other Human-induced errors would introduce noise. In fact, the report states that the biggest negative associated with an enumerative survey was not the prohibitive cost but its unreliability, and this motivated Mahalanobis to develop and implement an alternative approach that accomplished the task at a fraction of the cost and time, and at a higher level of accuracy using random sampling.

Random Sampling

The nearly 100M jute farms were spread over Bengal in a non-homogeneous manner. Some areas were densely cultivated, some sparsely. The approach was to partition the total area into zones, i = 1, ..., n (area A_i) whose area was internally homogeneous (kinda like the way finite element analysis is used in structural engineering). Within each zone, a number of areas or grids were selected and sampled at random. If a sufficient number of such grids were sampled, the average proportion of area under Jute within a zone (J_i) can be obtained, which allows us to predict the total area under Jute = sum(i) A_i * J_i.

Decision variables

1. The partition of the total area into approximately homogeneous zones

2. The number of random samples within a zone

3. The area of a sample

For simplicity, we assume that the first decision of partitioning the area within Bengal is an external input and thus our focus is on optimizing the remaining two decisions.

Constraints

1. The cost of the whole operation depends on the second and third set of decision variables. For a given budget, if the area of an individual sample is large, then the number of samples has to be reduced, and thus the samples would be more spread out and further away from each other.

2. The achievable precision (variance) varies similarly. If the sample area is large, the per-sample variance is smaller, but cost considerations limited the number of such large-samples, and this can hurt the overall variance accumulated across the zone. On the other hand, a smaller area in tandem with a larger number of such small-samples affects precision in an opposite manner.

Nonlinear Optimization Problem

Given either cost or precision as a hard constraint, select the sampling area and the number of random samples to maximize precision, or minimize cost.

Mahalanobis' approach attempts to model the change in variance and cost as continuous functions of the two decision variable sets. Once these functions are at hand, a local optimum is obtained using a derivative based Lagrange-multiplier method. Mahalanobis used this approach to tabulate the achievable precision for a range of cost levels.

An exploratory, small-scale (pilot) survey was initially conducted at a small expense as a proof-of-concept and proof-of-technology validation of the methodology prior to embarking on a full-scale project. This type of an approach is now widely adopted in many business analytics projects.

The effect of the decision variables on variance can be calculated relying on theoretical methods. However, human-induced errors were also common, and Mahalanobis used the idea of interpenetrating half-sample pairs, where two groups independently arrived at Jute area estimates for a given location. There are many important details here that are left out for brevity. The cost calculation is detailed and empirical and depends on the nature of the survey, and among things, include:

a. cost of staying and surveying at a given site - this depends on the size of the sampled area and time spent

b. cost of traveling from sample to sample - this depends on the distances between the chosen samples and the sequence of visiting.

Again, we have left out a humongous amount of cost calculations that were done. Reading the reports that came out of this work, one is amazed by the time and effort devoted to meticulously tabulating the various costs that go beyond 'ball-park' estimates, to produce an accurate cost function. For example cost calculation (b) depends on the solution to the corresponding traveling salesman problem.

The TSP

One of the many reports that came out of this this project notes:

This cost calculation is reviewed by Applegate, Bixby, et al. in their book on TSP and in Bill Cook's 'In Pursuit of the Traveling Salesman'. A literature review of this TSP in these books mention that researchers later showed that the expected length of the optimal tour was approximately between (0.707, 1.27) times the square root of the number of samples visited in a unit square, so Mahalanobis' 1930s estimate was a remarkably good choice.

Results and Business Impact

The cost- and precision-controlled random sampling approach proved to be revolutionary. It achieved greater precision at a fraction of the cost. Specifically, the margin of error was +/- 2%, and the cost was 1/15 of an enumeration census that was performed the same year and found to be less accurate compared to the random sampling approach. Thus the benefit and return-on-investment of this analytical approach was successfully demonstrated in practice, which received widespread recognition and was later embraced by the Government of independent India for many nationwide surveys.

Prelude to Part-3: The Bengal Holocaust

Within a couple years of the successful demonstration and publication of this work, Mahalanobis' Bengal lost between 2-6 million people due to starvation and disease between 1942-1945, triggered in part possibly by a failure of rice crop. The British Raj, locked in an grim Atlantic battle during WW2, may have suppressed reports and figures. It appears that most of the world, and even a vast majority of Indians, to this day, remain unaware of the reality behind this event. How to obtain a reasonable estimate of casualties due to this disaster? Who was responsible and how? A recent book has brought this controversy into the open, and it appears that Mahalanobis (and his statistical sampling method) may have played a critical part in solving this puzzle.

To be continued.

Monday, May 20, 2013

Solve TRP, Drive Tesla

So the awesome and hyped Tesla Model S is beating Mercedes, BMW, and Audi in sales despite the $70K price tag. One problem is the current lack of charging stations required to recharge over long trips. CNNMoney reports:

"CNNMoney took a Tesla Model S on a test drive from Washington D.C. to Boston. The all-electric car drove 450 miles with two stops to recharge at Tesla's Supercharger stations in Delaware and Connecticut"

A second problem is that unlike conventional gasoline cars that can be refueled very quickly, charging can take a relatively long time, and adds significantly to your total travel time. In the near future, as the number of Teslas increase, the number of charging stations is likely to go up too. However, it will remain a sparse resource for some time. A future Tesla trip from New York to California will require many recharging stops, reminiscent of the old stagecoach problem during the Gold Rush era, which is used to illustrate the principles of Dynamic Programming.

Update: May30, 2013

Popular Mechanics reports:

"...the California-based EV automaker plans to expand its fast-charging "supercharger" network across the continental U.S. During today's announcement, Musk said New York to Los Angeles trips would be viable as soon as this winter.

"When you get in a car you have the ability to go almost anywhere," Musk said. "That's what the supercharger network will do."

The rollout will begin as soon as this summer, with the number of stations tripling to 27 nationwide by the end of June. In addition to increasing supercharger density along the already-established California and Mid-Atlantic coasts, Tesla will debut chargers in a handful of new metropolitan areas within the next few weeks, including Austin/Houston, Portland/Seattle, Boulder/Denver, and Chicago/Milwaukee. By the end of the year, Musk expects to to have "a couple hundred" superchargers spread across the U.S. and southern Canada, with as little as 80 to 90 miles between stations along the Altantic and Pacific coasts..."

Tesla Routing Problem

Given a road network G having a limited number of charging stations located at specific nodes, the Tesla Routing Problem (TRP) will have to:

Find the shortest feasible time path from origin O to Destination D in network G.

Feasibility requires the car to visit enough charging stations in a timely manner. Optimality requires that the sequence of visits be carefully selected such that the total time spent is kept to a minimum.

Total travel time = driving time + recharge time

This involves two decisions:

D1. Which recharging stations to visit en-route?

D2. How long to recharge at every pit stop?

Assumptions:

A1. The longer you recharge, the longer your available range (to a limit).

A2. All recharging stations are similar, and infinite capacity (no queuing!)

A3. Range is deterministic

Update on A2: The Tesla can charge on any outlet, but the time to recharge can vary depending on the source.

Clearly, optimally solving TRP involves the finding a solution to a Traveling Salesman Problem even in the special case of instantaneous recharge (Y=0).

(Updated September 2013: As mentioned in the comments, the general case is not a TSP in the network of charging stations. Here, the assumption is that stations are sparsely located and we visit each one along the way. The title is changed to reflect this distinction).

This is no different from the (milder) challenge faced by conventional car drivers in unfamiliar areas where gas stations may be hard to come by (see this old post). Charge cannot be carried in Jerry-cans. The shortage of charging stations does make every long Tesla trip a non-trivial problem to manage. Tesla owners have to deal with a NP-Hard Optimization Problem (Heh) on every such trip, but in practice, it should not be difficult to find good quality, feasible TRP solutions.

The recharge time makes the TRP a bit more interesting. If two stations are close to each other, we don't have to recharge fully, and just need the minimum to reach the next stop - or perhaps we recharge fully, which allows us to reach a station that is further away but turns out to be more time-effective, overall. Clearly, these two decisions appear to be interlinked and may need to be jointly optimized. D1 is a binary decision variable X(i) - we either visit or don't visit a station at node X(i), whereas D2 is a semi-continuous variable Y(i) conditional on D1:

Y(i) ≤ U.X(i)

where U = maximum recharge time.

Another interesting and maybe even useful problem for Tesla-makers to solve is where to add new charging stations on the network G over time. What is the minimum number and location of stations required to guarantee feasible solutions to any TRP in the 48 states of continental US? How will stations deal with congestion in the future? and so on ...

I haven't driven a Tesla yet but I wouldn't mind relocating my office to inside one and telecommute.

"CNNMoney took a Tesla Model S on a test drive from Washington D.C. to Boston. The all-electric car drove 450 miles with two stops to recharge at Tesla's Supercharger stations in Delaware and Connecticut"

A second problem is that unlike conventional gasoline cars that can be refueled very quickly, charging can take a relatively long time, and adds significantly to your total travel time. In the near future, as the number of Teslas increase, the number of charging stations is likely to go up too. However, it will remain a sparse resource for some time. A future Tesla trip from New York to California will require many recharging stops, reminiscent of the old stagecoach problem during the Gold Rush era, which is used to illustrate the principles of Dynamic Programming.

Update: May30, 2013

Popular Mechanics reports:

"...the California-based EV automaker plans to expand its fast-charging "supercharger" network across the continental U.S. During today's announcement, Musk said New York to Los Angeles trips would be viable as soon as this winter.

"When you get in a car you have the ability to go almost anywhere," Musk said. "That's what the supercharger network will do."

The rollout will begin as soon as this summer, with the number of stations tripling to 27 nationwide by the end of June. In addition to increasing supercharger density along the already-established California and Mid-Atlantic coasts, Tesla will debut chargers in a handful of new metropolitan areas within the next few weeks, including Austin/Houston, Portland/Seattle, Boulder/Denver, and Chicago/Milwaukee. By the end of the year, Musk expects to to have "a couple hundred" superchargers spread across the U.S. and southern Canada, with as little as 80 to 90 miles between stations along the Altantic and Pacific coasts..."

Tesla Routing Problem

Given a road network G having a limited number of charging stations located at specific nodes, the Tesla Routing Problem (TRP) will have to:

Find the shortest feasible time path from origin O to Destination D in network G.

Feasibility requires the car to visit enough charging stations in a timely manner. Optimality requires that the sequence of visits be carefully selected such that the total time spent is kept to a minimum.

Total travel time = driving time + recharge time

D1. Which recharging stations to visit en-route?

D2. How long to recharge at every pit stop?

Assumptions:

A1. The longer you recharge, the longer your available range (to a limit).

A2. All recharging stations are similar, and infinite capacity (no queuing!)

A3. Range is deterministic

Update on A2: The Tesla can charge on any outlet, but the time to recharge can vary depending on the source.

Clearly, optimally solving TRP involves the finding a solution to a Traveling Salesman Problem even in the special case of instantaneous recharge (Y=0).

(Updated September 2013: As mentioned in the comments, the general case is not a TSP in the network of charging stations. Here, the assumption is that stations are sparsely located and we visit each one along the way. The title is changed to reflect this distinction).

This is no different from the (milder) challenge faced by conventional car drivers in unfamiliar areas where gas stations may be hard to come by (see this old post). Charge cannot be carried in Jerry-cans. The shortage of charging stations does make every long Tesla trip a non-trivial problem to manage. Tesla owners have to deal with a NP-Hard Optimization Problem (Heh) on every such trip, but in practice, it should not be difficult to find good quality, feasible TRP solutions.

The recharge time makes the TRP a bit more interesting. If two stations are close to each other, we don't have to recharge fully, and just need the minimum to reach the next stop - or perhaps we recharge fully, which allows us to reach a station that is further away but turns out to be more time-effective, overall. Clearly, these two decisions appear to be interlinked and may need to be jointly optimized. D1 is a binary decision variable X(i) - we either visit or don't visit a station at node X(i), whereas D2 is a semi-continuous variable Y(i) conditional on D1:

Y(i) ≤ U.X(i)

where U = maximum recharge time.

Another interesting and maybe even useful problem for Tesla-makers to solve is where to add new charging stations on the network G over time. What is the minimum number and location of stations required to guarantee feasible solutions to any TRP in the 48 states of continental US? How will stations deal with congestion in the future? and so on ...

I haven't driven a Tesla yet but I wouldn't mind relocating my office to inside one and telecommute.

Sunday, April 14, 2013

Optimally Managing Ladybugs

My daughter loves ladybugs. However, when it comes to cleverness, many have noted how bees and ants (which seem to have a special place in Indian hearts :) "solve" traveling salesman and shortest path instances that matter to them (see this interesting link as well). In contrast, a ladybug's decision analytics appears to be somewhat shaky. They don't seem to have the kind of auto-GPS of bees and ants (at least in the context of this post), and seem to get confused and stray from their optimal path.

(pic source)

Recently, a number of LBs invaded my residence, and I spent a lot of time trying to shoo them away and determine how they got inside in the first place so that I could block their entry. Then, I came across this website. Apparently, ladybugs prefer humidity and warmth and try to enter homes as winter begins. OK. However, a good number also enter the house come spring time, which was puzzling. It seems some of the LBs goof up. Ladybugs roosting under windows outside the house, with a certain probability, enter the house instead of enjoying spring time outside. Once inside, they suffer from dehydration and die (unless they figure out the route to the bathroom or kitchen). If we try to force them out, they get stressed and shed 'yellow blood' that can stain walls. The optimal ladybug solution for this time of the year is to simply open the windows, so the ones who came inside by mistake can leave. It's a win-win for both parties, and it actually worked. On the other hand, during winter, we have to try and provide a cozy, alternative home in backyard and insulate the house well to keep them out.

(pic source)

Recently, a number of LBs invaded my residence, and I spent a lot of time trying to shoo them away and determine how they got inside in the first place so that I could block their entry. Then, I came across this website. Apparently, ladybugs prefer humidity and warmth and try to enter homes as winter begins. OK. However, a good number also enter the house come spring time, which was puzzling. It seems some of the LBs goof up. Ladybugs roosting under windows outside the house, with a certain probability, enter the house instead of enjoying spring time outside. Once inside, they suffer from dehydration and die (unless they figure out the route to the bathroom or kitchen). If we try to force them out, they get stressed and shed 'yellow blood' that can stain walls. The optimal ladybug solution for this time of the year is to simply open the windows, so the ones who came inside by mistake can leave. It's a win-win for both parties, and it actually worked. On the other hand, during winter, we have to try and provide a cozy, alternative home in backyard and insulate the house well to keep them out.

Wednesday, May 2, 2012

Optimal Shoelacing

There are gazillion alternative ways to tie your shoelaces of which only a few patterns are easy to remember and possess nice symmetry.

My daughter got her first laced shoes a few weeks ago. As she took it out of the box, the first noticeable thing was that the slack required to tie the laces appeared to be on the shorter side. This posed difficulties for my daughter while she was learning to make those neat knots. Rewiring the shoes resulted in quite a bit of slack, which resulted in big knots that not only helped her learn quickly, but also seemed irresistible to her kindergarten classmates who kept tugging at it. So the aim was to find a lacing pattern that would generate just the right amount of slack.

This is of course an instance of a Traveling Salesman Problem (TSP). Each lace hole represents a city which can be visited exactly once. For example, the slack is maximized by finding the shortest tour, while the slack can be minimized by finding the longest Hamiltonian circuit. Of course, my daughter would prefer finding a good balance between these two extreme solutions, while also ensuring that tightening and loosening the laces are relatively easy to perform. The former objective can be equivalently specified in terms of minimizing deviation from a desired tour length, while the latter requirement can perhaps be approximated by eliminating unfavorable connection patterns and reducing overall friction.

Any OR person will tell you this: just because the TSP is a notorious NP-Hard problem, it does not automatically mean that practical instances are terribly difficult to manage. On the contrary, OR methods excel in quickly finding amazingly (and provably) good answers to practical instances of underlying TSP substructures within decision problems across a variety of industries.

My daughter got her first laced shoes a few weeks ago. As she took it out of the box, the first noticeable thing was that the slack required to tie the laces appeared to be on the shorter side. This posed difficulties for my daughter while she was learning to make those neat knots. Rewiring the shoes resulted in quite a bit of slack, which resulted in big knots that not only helped her learn quickly, but also seemed irresistible to her kindergarten classmates who kept tugging at it. So the aim was to find a lacing pattern that would generate just the right amount of slack.

This is of course an instance of a Traveling Salesman Problem (TSP). Each lace hole represents a city which can be visited exactly once. For example, the slack is maximized by finding the shortest tour, while the slack can be minimized by finding the longest Hamiltonian circuit. Of course, my daughter would prefer finding a good balance between these two extreme solutions, while also ensuring that tightening and loosening the laces are relatively easy to perform. The former objective can be equivalently specified in terms of minimizing deviation from a desired tour length, while the latter requirement can perhaps be approximated by eliminating unfavorable connection patterns and reducing overall friction.

Any OR person will tell you this: just because the TSP is a notorious NP-Hard problem, it does not automatically mean that practical instances are terribly difficult to manage. On the contrary, OR methods excel in quickly finding amazingly (and provably) good answers to practical instances of underlying TSP substructures within decision problems across a variety of industries.

Thursday, March 1, 2012

House Hunting Efficiently

One of the consequences of the kind of convergence shown in this graph was that it created the need to buy a house. It's become a ritual to spend weekdays creating a list of houses that are feasible with respect to hard constraints (big kitchen, level lot, ..), and then converting that into a prioritized list based on how they score on soft constraints (pre-wired for Bose speakers, for example) that in turn motivates a preferred way of visiting these houses during weekends. I noticed that I rarely see each house in isolation and my view of a house tends to be colored by what I saw earlier. However, of late, the time to view these houses has become a scarce resource, so I created an O.R driven prioritized list that maximizes and optimally allocates viewing time, keeping the total duration equal to the limited time available. I used my automobile GPS unit as the solver.

This GPS unit "solves" the Traveling Salesman Problem (TSP) to figure out the optimal (?) order of visitation that minimizes total drive time, which automatically maximizes aggregate viewing time. (In particular, if houses are located on either side of a busy highway or Main Street, a good heuristic would be ensure that the optimal path intersects such a link infrequently.) I can then allocate the optimal expected viewing time to the houses based on personal preferences. The total viewing time also informs me if I have spread myself too thin, in which case, I can start deleting houses with the lowest scores from the list and re-optimize until the solution looks reasonable.

If viewing order is important from a 'relative comparison' perspective, the resultant constrained TSP problem becomes a bit more harder to solve using a GPS unit. A simple heuristic rule could be to fix the second node ("first house to first") and/or the second-last node ("house to visit last") of the tour and let the others be visited based on time-optimality. If your realtor drives you around, her/his office is the start and end node of the Hamiltonian circuit.

One issue I encountered while using the GPS unit to merely drive-by a house as part of a local neighborhood search (pun unintended) is that I had to get close enough to the house and maybe pause a bit to inform the GPS that this house has been reached. Otherwise, the GPS unit would continually re-route me back to the house, resulting in considerable confusion.

This GPS unit "solves" the Traveling Salesman Problem (TSP) to figure out the optimal (?) order of visitation that minimizes total drive time, which automatically maximizes aggregate viewing time. (In particular, if houses are located on either side of a busy highway or Main Street, a good heuristic would be ensure that the optimal path intersects such a link infrequently.) I can then allocate the optimal expected viewing time to the houses based on personal preferences. The total viewing time also informs me if I have spread myself too thin, in which case, I can start deleting houses with the lowest scores from the list and re-optimize until the solution looks reasonable.

If viewing order is important from a 'relative comparison' perspective, the resultant constrained TSP problem becomes a bit more harder to solve using a GPS unit. A simple heuristic rule could be to fix the second node ("first house to first") and/or the second-last node ("house to visit last") of the tour and let the others be visited based on time-optimality. If your realtor drives you around, her/his office is the start and end node of the Hamiltonian circuit.

One issue I encountered while using the GPS unit to merely drive-by a house as part of a local neighborhood search (pun unintended) is that I had to get close enough to the house and maybe pause a bit to inform the GPS that this house has been reached. Otherwise, the GPS unit would continually re-route me back to the house, resulting in considerable confusion.

Monday, October 25, 2010

Here we go again! Bees and TSP

First the original story today doing the rounds on the Internet on bees solving a TSP while traversing a sequence of flowers for nectar. Their approach resembles the ant-colony / swarm-optimization approach, and while this 'bee story' is truly astounding, the author further states the computers have to explore every possible path to select the best and that takes days. Obviously, they did not hear about Operations Research, and the fantastic work done on inventing efficient algorithms for solving gigantic and difficult TSP problems to provable (near-) global optimality in quick time. The fantastic book on TSP by Dr. Applegate, Dr. Bixby, et al. gives us a fascinating blow-by-blow account of the work on this topic from the TSP past to the present. You can even try out their Concorde solver. The state-of-the-art is truly amazing and based on decades of breakthrough ideas.

Yet, article after article that seem to come out every couple of years assumes that if a problem is NP-Hard, then it is almost "impossible to solve efficiently in practice" and enumeration is the only way. Nope. A pure application of software programming and hardware strategies certainly does not work or scale. OR leads the way.

An incredible amount of success in OR practice has come via successfully tackling precisely such large-scale and ultra-complex NP-Hard problems. Large-scale TSP-structured problems have been routinely solved in Supply-chain planning, vehicle routing, aircraft routing, etc. Not only are problems solved well and quickly, but we also know how well and how much improvement is still possible. These small optimality gaps matter. A TSP solution that is 1% closer to optimality may save a company another million dollars.

Heuristics of unknown quality based on bees and ants are nice, but they don't really tell you if there is another solution that could your save your company another 10%. And if you were to change your input parameters, it may return an entirely different solution that makes such approaches largely unpractical for use within products. And adding a constraint the prohibits certain paths may well make such approaches useless. This Tab has discussed the practical problems with using such heuristics in previous posts here, and you will find more in Dr. Trick's OR Blog when TSP was "solved" last year by creatures with even smaller brains, i.e. bacteria :-)

There's even TSP art. Check this out as well. Let's give O.R. and humans some credit please!

Yet, article after article that seem to come out every couple of years assumes that if a problem is NP-Hard, then it is almost "impossible to solve efficiently in practice" and enumeration is the only way. Nope. A pure application of software programming and hardware strategies certainly does not work or scale. OR leads the way.

An incredible amount of success in OR practice has come via successfully tackling precisely such large-scale and ultra-complex NP-Hard problems. Large-scale TSP-structured problems have been routinely solved in Supply-chain planning, vehicle routing, aircraft routing, etc. Not only are problems solved well and quickly, but we also know how well and how much improvement is still possible. These small optimality gaps matter. A TSP solution that is 1% closer to optimality may save a company another million dollars.

Heuristics of unknown quality based on bees and ants are nice, but they don't really tell you if there is another solution that could your save your company another 10%. And if you were to change your input parameters, it may return an entirely different solution that makes such approaches largely unpractical for use within products. And adding a constraint the prohibits certain paths may well make such approaches useless. This Tab has discussed the practical problems with using such heuristics in previous posts here, and you will find more in Dr. Trick's OR Blog when TSP was "solved" last year by creatures with even smaller brains, i.e. bacteria :-)

There's even TSP art. Check this out as well. Let's give O.R. and humans some credit please!

Monday, July 26, 2010

Analytics in Acadia

Living close to the Acadia national park has turned me into a local tourist guide for friends and relatives who consider any visit to Maine incomplete unless they spend a day there. By applying OR and data analysis, I've kinda figured out the right days to visit Bar Harbor in summer and escape the tourist crowd. On days having a very high bliss factor, an OR person can relax there and think about the naturally elegant analytics hidden all around.

The main scenic route (the park loop road) is a nice 'hard-coded' feasible solution to the TSP that requires us to touch all the popular spots in the park while constraining the grade in the resultant road to be within a safe level, while also reducing the amount of earth to be moved. Of course, too short a path is not very enjoyable, while too long a path will find tourists driving relatively fast to skip the repetitiveness, so the total length of the Hamiltonian circuit should ideally be within some desired bound.

As we think about path lengths, there is an equally interesting observation one can make about the beautiful Maine coastline while driving along the circuit - it certainly looks long. Now, if you look at the map of the US, the California coast seems much longer in comparison. However, if you take into account the bays and undulations and increase the level of detail, the Maine coastline is comfortably longer - the fractal coast length of Maine is more than 2000 miles long (or so i heard). The fractal dimension of the Boothbay, ME coastline was calculated to be 1.27.

Per Wikipedia, 'Maine' is the only single-syllable state-name and also the only state to share a border with a single US state (New Hampshire), which means that we require just two colors to map this region of the US. We share a border with New Brunswick, CA, whose DOT, along with Remsoft, Inc., were Edelman finalists this year. I attended their presentation at the INFORMS practice conference, and it was a really fine one, and I hope our US DOTs learn from them to ensure that the economic stimulus money is optimally spent.

The main scenic route (the park loop road) is a nice 'hard-coded' feasible solution to the TSP that requires us to touch all the popular spots in the park while constraining the grade in the resultant road to be within a safe level, while also reducing the amount of earth to be moved. Of course, too short a path is not very enjoyable, while too long a path will find tourists driving relatively fast to skip the repetitiveness, so the total length of the Hamiltonian circuit should ideally be within some desired bound.

As we think about path lengths, there is an equally interesting observation one can make about the beautiful Maine coastline while driving along the circuit - it certainly looks long. Now, if you look at the map of the US, the California coast seems much longer in comparison. However, if you take into account the bays and undulations and increase the level of detail, the Maine coastline is comfortably longer - the fractal coast length of Maine is more than 2000 miles long (or so i heard). The fractal dimension of the Boothbay, ME coastline was calculated to be 1.27.

Per Wikipedia, 'Maine' is the only single-syllable state-name and also the only state to share a border with a single US state (New Hampshire), which means that we require just two colors to map this region of the US. We share a border with New Brunswick, CA, whose DOT, along with Remsoft, Inc., were Edelman finalists this year. I attended their presentation at the INFORMS practice conference, and it was a really fine one, and I hope our US DOTs learn from them to ensure that the economic stimulus money is optimally spent.

Monday, April 12, 2010

Doogie, Darwin, Dowry, and the TSP

A couple of teenagers from the U.S. visited the beautiful IIT campus in Madras (Chennai), India in 1989-90. They were not there to attend the popular collegiate cultural festival 'Mardi Gras' as it was known back in those days, but to present a research paper on AIDS. They happened to be brothers, Balamurali Ambati, and Jayakrishna Ambati, who completed medical school at a fairly young age. Per Wikipedia, BA graduated from the Mount Sinai school of medicine at the age of 13, and become a qualified doctor at 17 in 1995.

Today, the Ambani brothers hog the media space in India as they seek to become richer, but for a brief while in the 1990s, the elder Ambati brother got entangled in a 'dowry harassment' scandal. Dowry harassment reports was big news in India, with the per-capita dowry-deaths in line with the number of 'murder for insurance' cases in the US, or the wife-beating cases in Switzerland. Anyway, reports indicate that the case fell apart after the bride's father was recorded on tape trying to extort a few hundred big ones in blackmail money. Unfortunately for the elder brother, it looks like like he had to cool his heels in India until this case was wholly resolved, losing a good two years in the "youngest achiever" race, which has since become an idiotic, even deadly craze in Southern India. This is in contrast with the more comical approach in Northern India and Pakistan, where many kids are 2-5 years older than their official age. If you were that skinny, baby-faced runt in a middle school in Bangalore, he would be that guy with the stubble in the last bench, and the captain of your school's football (soccer) and (field-) hockey teams.

Pardon the digression. Around the time the Ambati brothers visited the IITM campus (A former student reminisces here) to talk about AIDS, they were also the primary authors of this published paper on the traveling salesman problem. The title is exciting, but a tad misleading, in that it hints at a polynomial time algorithm for the NP-Hard TSP. It resembles a randomized heuristic approach based on the theory of natural selection, and appears to possess good computational properties, and has been cited more than once in followup research in this area. On the other hand, I don't think even Doogie did any OR work, real or fictional.

Today, the Ambani brothers hog the media space in India as they seek to become richer, but for a brief while in the 1990s, the elder Ambati brother got entangled in a 'dowry harassment' scandal. Dowry harassment reports was big news in India, with the per-capita dowry-deaths in line with the number of 'murder for insurance' cases in the US, or the wife-beating cases in Switzerland. Anyway, reports indicate that the case fell apart after the bride's father was recorded on tape trying to extort a few hundred big ones in blackmail money. Unfortunately for the elder brother, it looks like like he had to cool his heels in India until this case was wholly resolved, losing a good two years in the "youngest achiever" race, which has since become an idiotic, even deadly craze in Southern India. This is in contrast with the more comical approach in Northern India and Pakistan, where many kids are 2-5 years older than their official age. If you were that skinny, baby-faced runt in a middle school in Bangalore, he would be that guy with the stubble in the last bench, and the captain of your school's football (soccer) and (field-) hockey teams.

Pardon the digression. Around the time the Ambati brothers visited the IITM campus (A former student reminisces here) to talk about AIDS, they were also the primary authors of this published paper on the traveling salesman problem. The title is exciting, but a tad misleading, in that it hints at a polynomial time algorithm for the NP-Hard TSP. It resembles a randomized heuristic approach based on the theory of natural selection, and appears to possess good computational properties, and has been cited more than once in followup research in this area. On the other hand, I don't think even Doogie did any OR work, real or fictional.

Subscribe to:

Comments (Atom)